How We Cut Our LLM Costs 60% With Request Routing

A practical breakdown of how intelligent routing, caching, and model selection through an LLM gateway can dramatically reduce your AI infrastructure costs.

Most teams start their AI journey the same way: pick a flagship model, point all requests at it, and watch the bill climb. It works — until you're spending thousands per month and realize that 70% of your requests didn't need a $10/M-token model in the first place.

Here's how a gateway-based approach to LLM routing can cut costs by 60% or more without sacrificing quality where it matters.

The Problem: One Model for Everything

Consider a typical AI-powered SaaS application that handles 10,000 requests per day with a mix of tasks:

- Classifying support tickets

- Generating email drafts

- Summarizing documents

- Answering complex technical questions

- Extracting structured data from forms

If you run everything through GPT-5 ($1.25 input / $10.00 output per 1M tokens), you're paying flagship prices for tasks that a model 10x cheaper could handle just as well.

Monthly cost with GPT-5 only: ~$1,875/month (assuming 1K input + 500 output tokens average)

Strategy 1: Route by Complexity

Not every request needs your best model. By categorizing requests and routing them to appropriate model tiers, you immediately cut costs on the majority of your traffic.

| Request Type | % of Traffic | Model | Cost per 1M Output |

|---|---|---|---|

| Simple (classification, extraction) | 70% | GPT-4.1 Nano | $0.40 |

| Moderate (summarization, drafts) | 20% | Gemini 2.5 Flash | $2.50 |

| Complex (reasoning, analysis) | 10% | GPT-5 | $10.00 |

Monthly cost with routing: ~$270/month

That's an 85% reduction from using GPT-5 for everything — and users won't notice the difference on simple tasks.

Strategy 2: Response Caching

Many LLM requests are repetitive. Support ticket classifiers, FAQ responses, and template-based generations often produce identical or near-identical outputs for similar inputs.

With gateway-level caching:

- Identical requests return cached responses instantly

- Cache hit rates of 15-30% are common for production apps

- Cached responses have zero token cost and near-zero latency

A 20% cache hit rate on our 10,000 daily requests means 2,000 fewer billable requests per day.

Additional savings from caching: ~15-20% on top of routing savings

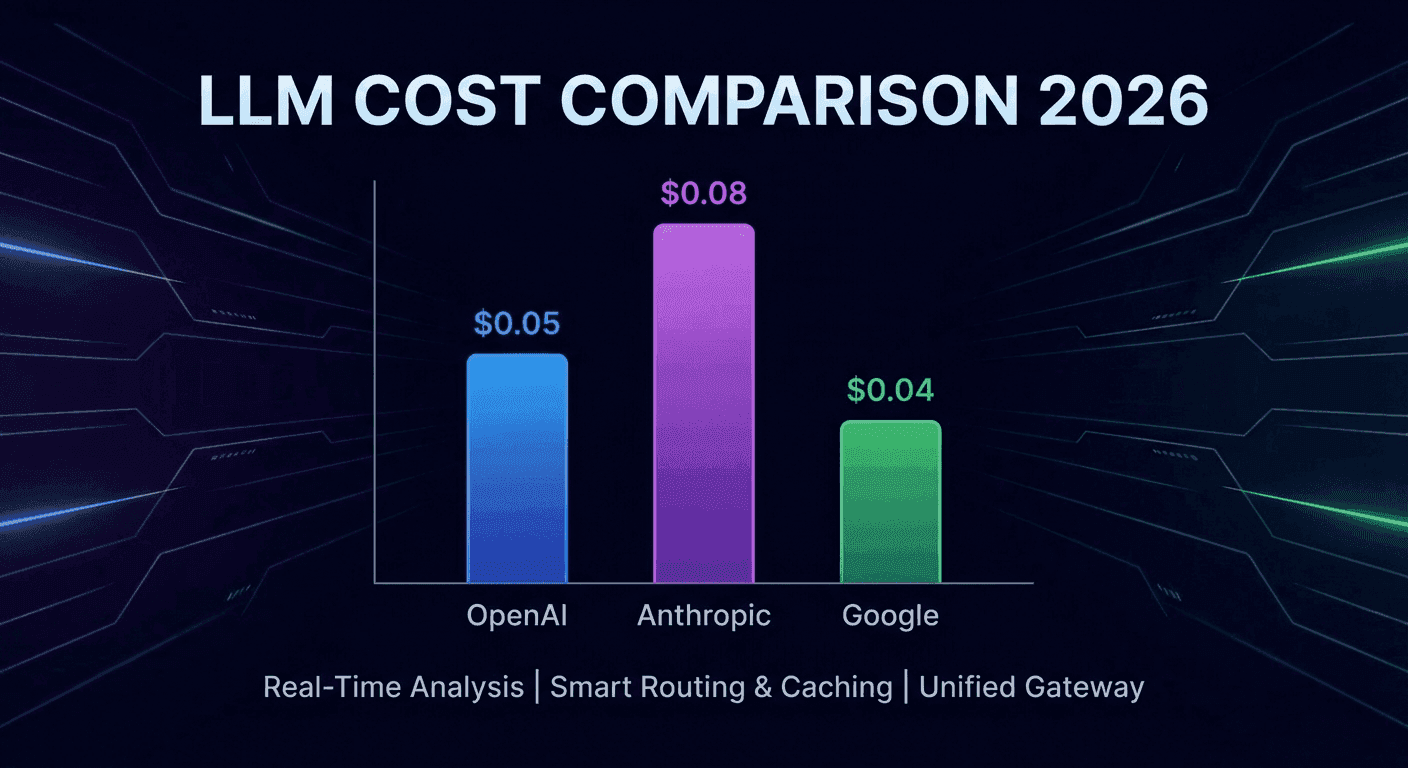

Strategy 3: Provider Arbitrage

The same model quality tier is priced differently across providers. An LLM gateway lets you compare and switch without code changes:

| Tier | Option A | Option B | Savings |

|---|---|---|---|

| Flagship | Claude Opus 4.6 ($25/M out) | GPT-5 ($10/M out) | 60% |

| Mid-tier | Claude Sonnet 4.5 ($15/M out) | Gemini 2.5 Flash ($2.50/M out) | 83% |

| Budget | Claude Haiku 4.5 ($5/M out) | GPT-4.1 Nano ($0.40/M out) | 92% |

When you're locked into a single provider, you can't take advantage of pricing differences. A gateway gives you the flexibility to pick the best price-to-quality ratio for each use case.

Strategy 4: Automatic Fallback

Provider outages happen. Without a fallback strategy, an outage means downtime — and downtime means lost revenue that dwarfs any LLM cost savings.

With gateway-level fallback:

- Primary request goes to your preferred provider

- If it fails, the gateway automatically retries with an alternative provider

- Your application stays up, and users never notice

This isn't just a cost strategy — it's a reliability strategy that happens to also give you pricing flexibility.

The Combined Effect

Putting it all together for our 10,000 requests/day scenario:

| Strategy | Monthly Cost | Savings vs. Baseline |

|---|---|---|

| Baseline (GPT-5 only) | $1,875 | — |

| + Complexity routing | $270 | 85% |

| + Response caching (20%) | $216 | 88% |

| + Provider arbitrage | ~$180 | 90% |

The exact numbers depend on your traffic patterns, but the principle holds: most teams are dramatically overspending on LLM costs because they're using a single expensive model for everything.

Getting Started

LLM Gateway handles all of this out of the box:

- Smart routing across 300+ models from every major provider

- Response caching with Redis for instant repeated queries

- Automatic fallback when providers go down

- Cost tracking so you can see exactly where your money goes

1curl https://api.llmgateway.io/v1/chat/completions \2 -H "Authorization: Bearer YOUR_API_KEY" \3 -H "Content-Type: application/json" \4 -d '{5 "model": "gpt-4.1-nano",6 "messages": [{"role": "user", "content": "Classify this ticket: My password reset email never arrived"}]7 }'1curl https://api.llmgateway.io/v1/chat/completions \2 -H "Authorization: Bearer YOUR_API_KEY" \3 -H "Content-Type: application/json" \4 -d '{5 "model": "gpt-4.1-nano",6 "messages": [{"role": "user", "content": "Classify this ticket: My password reset email never arrived"}]7 }'Switch models by changing a single string. No SDK changes, no code rewrites.

Start saving on LLM costs | Compare model pricing | Read the docs