LLM Gateway vs Direct API: When the Provider SDK Stops Scaling

Calling OpenAI or Anthropic directly is the right first call. Here's the honest case for when a gateway starts paying for itself — and when you don't need one yet.

Latest news and updates from LLM Gateway

How LLM response caching actually works, where it helps, where it doesn't, and how to turn it on without rewriting your app.

A practical guide to forecasting LLM costs: the token formula, real-world examples across GPT-5.4, Claude, and Gemini, and a free calculator to run the numbers.

A straightforward comparison of LLM Gateway and LiteLLM — features, operational cost, and trade-offs — so you can pick the right one for your stack.

A practical framework for picking the right model — based on task type, budget, latency requirements, and context window — instead of chasing benchmarks.

A straightforward comparison of LLM Gateway and OpenRouter — features, pricing, and trade-offs — so you can pick the right one for your stack.

What LLM guardrails are, why they matter in production, and how to implement content safety without building it yourself.

An honest comparison of the top AI gateways — features, pricing, and trade-offs — so you can pick the right one for your stack.

We compared DeepSeek V3.2 pricing across every major API provider. Here's the definitive ranking — and how our Token Cost Calculator can help you estimate exact savings.

Side-by-side pricing comparison of GPT-5, Claude Opus 4.6, and Gemini 2.5 Pro with real cost calculations for production workloads.

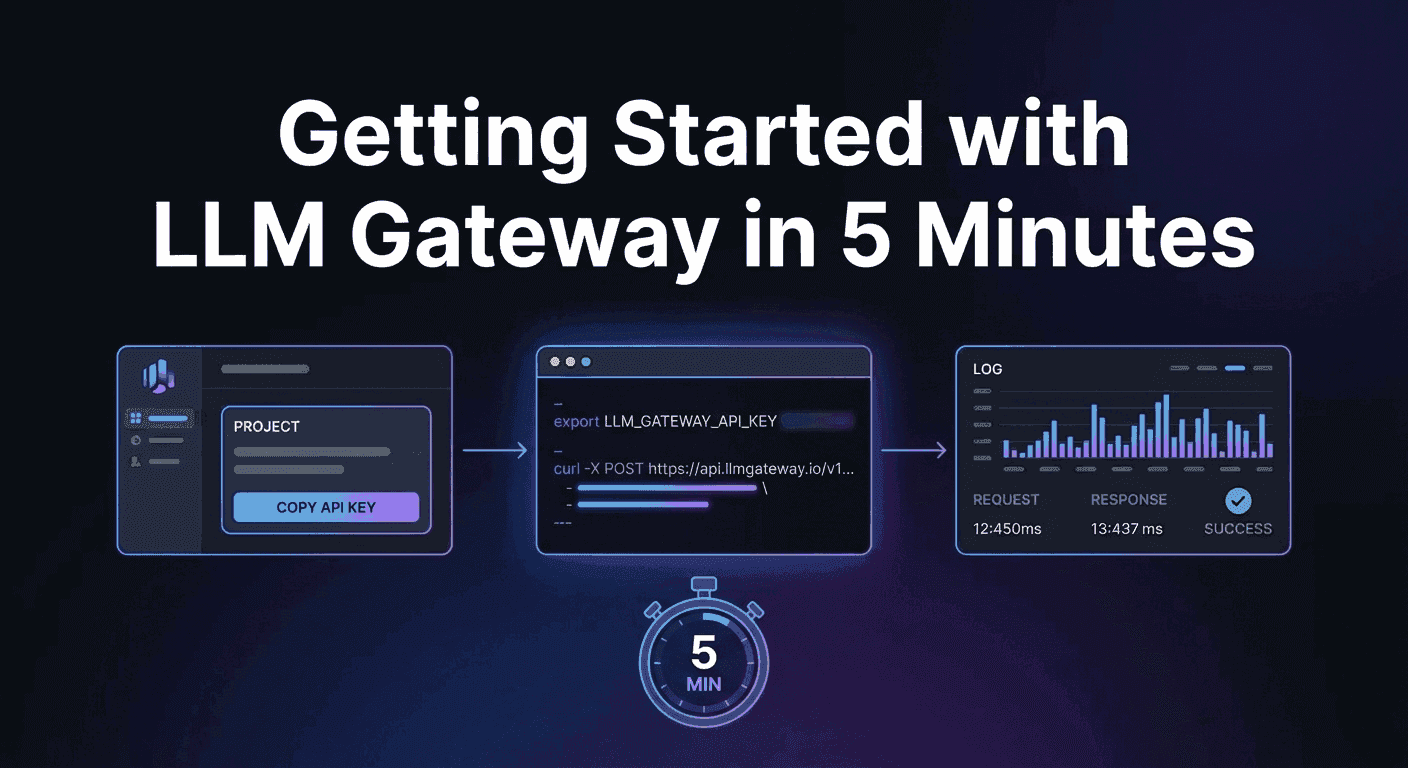

A step-by-step guide to making your first LLM API request through LLM Gateway — from signup to seeing results in your dashboard.

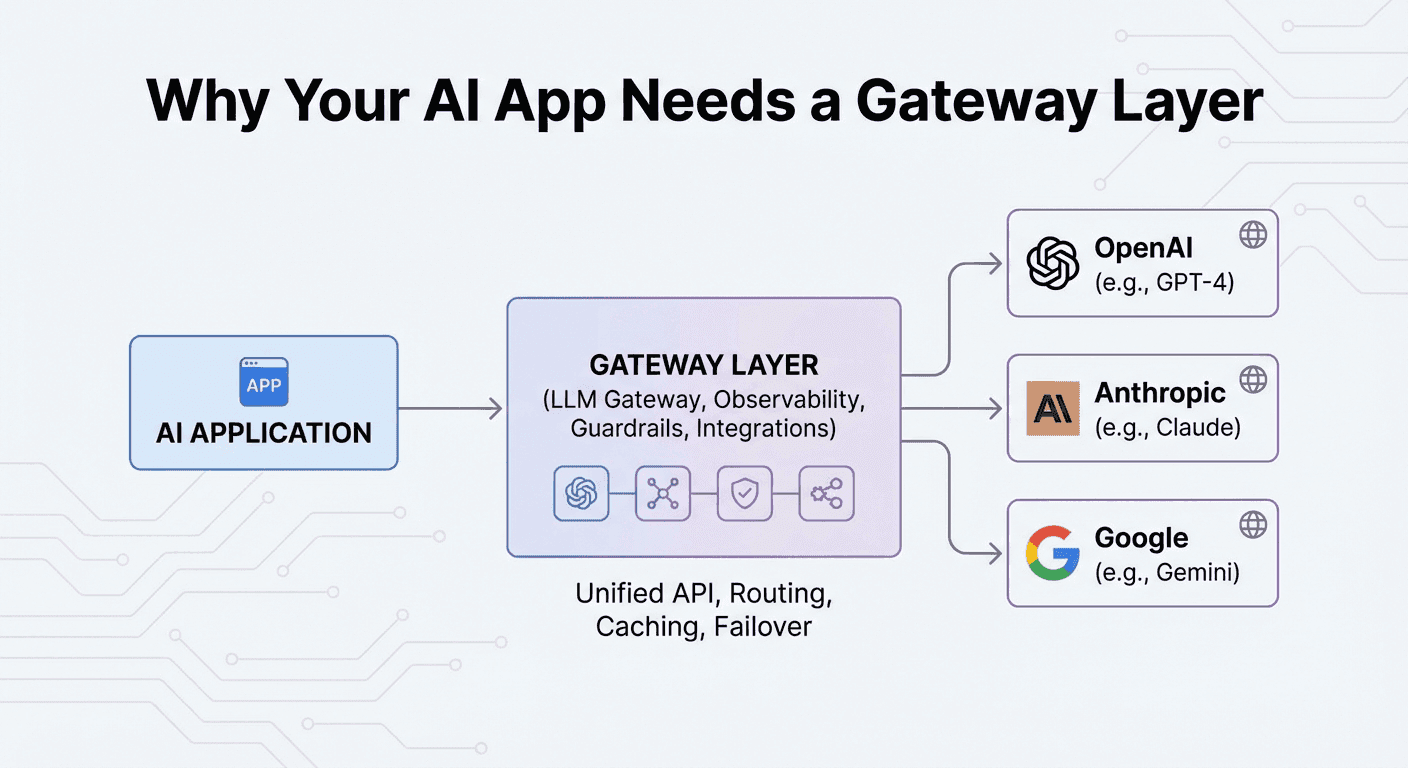

What an LLM gateway does, why it matters, and how it lets you ship AI features faster by abstracting away provider complexity.

Learn what an LLM Gateway is, why you need one, and how it simplifies integrating, managing, and deploying large language models in production.

Use GPT-5, Gemini, or any model with Claude Code. Three environment variables, zero code changes.

Run LLM Gateway on your own infrastructure in under 5 minutes. Full control, zero platform fees.